During his artist-in-residence program at the Ars Electronica Futurelab, the Australian media artist Daniel Crooks set out to overcome the limits of the screen and generate physical three-dimensional sculptures from his video work. In this interview, he and Otto Naderer discuss whether he succeeded.

In spring, the Australian media artist Daniel Crooks took part in an artist-in-residence program at the Ars Electronica Futurelab to do research on his project “Real Imaginary Objects”. We caught up with him and Otto Naderer, member of the Ars Electronica Futurelab and person in charge of this project, to talk about the development of this exciting project.

Daniel, what is involved in this project?

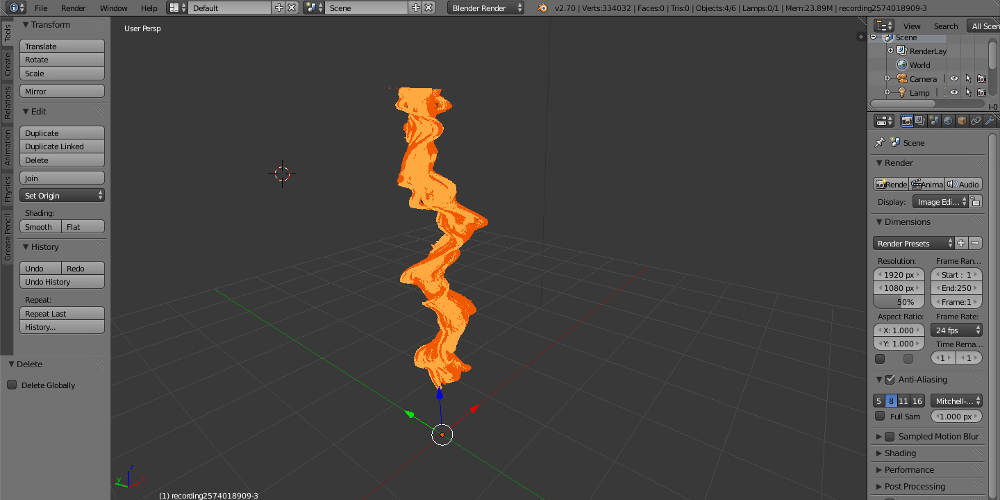

Daniel Crooks: The central methodology in my video practice is the explicit treatment of time as a physical material. At the Futurelab I aim to extend this research beyond the bounds of the video screen into physical three-dimensional sculptures. The main objective of the project is to develop a new kind of camera, a 3D slicing camera, able to capture a sequence of cross sections of a space at high frame rates. The recorded sequence of 2D ‘frames’ will be accumulated into a 3D model, a kind of time-stack, where the third dimension is temporal. The resulting objects will then be realised using computer-controlled fabrication techniques to create physical sculptures. This project marks an important development in my practice, a move into object making and a deeper investigation of the relationship between representations of still and moving images and three-dimensional sculpture.

What inspired you to start the project?

Daniel Crooks: The project really came out of some of my early video and photographic works. One of the central concerns in my practice is the explicit treatment of time as a physical material and for a long time I’ve been wanting to extend that idea from the screen into physical objects.

Why did you choose the Ars Electronica Futurelab as the place for your residency?

Daniel Crooks: The project was always going to be very experimental and also needed a fair degree of crossover between hardware research and software development. I knew the Futurelab would be able to provide a real diversity of knowledge and the kind of lateral thinking that the project required.

Your main objective for the residency was to develop a 3D slicing camera. Did you reach this goal, and if so, how?

Daniel Crooks: I worked closely with Otto Naderer and we were able to develop a very clear proof of concept. After testing a number of different approaches, we were able to obtain some very promising results from the new version of the Kinect camera. Unfortunately, these devices are not due for final release until later in the year. However, we were able to demonstrate a viable workflow and test a small-scale prototype setup that was capable of generating very usable data.

Otto Naderer: My main field of research in the Ars Electronica Futurelab is Computer Vision and the development of Tracking Systems. In a recent project I used laser rangers to reliably track the position and movement of people in a crowded scene. Such a sensor measures the distance to the objects surrounding it by emitting a laser pulse and measuring the round-trip time. The device thus provides a planar outline of its surroundings across an angle of 270°. So when I heard of Daniel’s idea to develop a slicing camera, laser rangers were the obvious choice.
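The measuring principle Naderer describes can be illustrated with a minimal sketch. It is not the Futurelab's code: the function names, the evenly spaced readings, and the choice to centre the 270° sweep on the sensor's forward axis are all assumptions made for illustration.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def distance_from_round_trip(seconds):
    """Return the distance to a target from the pulse's round-trip time.

    The pulse travels to the object and back, so the path is halved.
    """
    return SPEED_OF_LIGHT * seconds / 2.0


def scan_to_points(distances, field_of_view_deg=270.0):
    """Convert one sweep of range readings into (x, y) points.

    Assumption: readings are evenly spaced across the field of view,
    which is centred on the sensor's forward (positive x) axis.
    """
    n = len(distances)
    if n == 0:
        return []
    start = -field_of_view_deg / 2.0
    step = field_of_view_deg / (n - 1) if n > 1 else 0.0
    points = []
    for i, d in enumerate(distances):
        angle = math.radians(start + i * step)
        points.append((d * math.cos(angle), d * math.sin(angle)))
    return points
```

Each sweep then yields one planar outline of whatever surrounds the sensor, which is the raw material for a single "slice".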

After receiving test equipment from the manufacturer SICK, we began to develop the “Slicer” application, which aligns the data from four surrounding laser rangers, computes closed shapes and ‘stacks’ them over time. Implementations of several common 3D model formats make it easy to export the recorded data to 3D modeling software for post-processing.
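The stacking and export step can be sketched roughly as follows. This is not the "Slicer" application itself: it skips sensor alignment and shape computation entirely, assumes every outline has already been resampled to the same number of vertices, and picks Wavefront OBJ as one example of a common 3D model format. The function name `stack_slices_to_obj` and the `time_step` parameter are hypothetical.

```python
def stack_slices_to_obj(slices, time_step=1.0):
    """Stack closed 2D outlines along a time axis and return OBJ text.

    slices: list of outlines, each a list of (x, y) tuples.
    Assumption: all outlines have the same vertex count, so vertex i of
    one slice can be joined to vertex i of the next.
    """
    if not slices:
        return ""
    n = len(slices[0])
    if any(len(outline) != n for outline in slices):
        raise ValueError("all outlines must have the same vertex count")

    lines = []
    # One ring of vertices per slice; z encodes time.
    for t, outline in enumerate(slices):
        z = t * time_step
        for x, y in outline:
            lines.append(f"v {x} {y} {z}")

    # Join neighbouring rings with quads (OBJ indices start at 1).
    for t in range(len(slices) - 1):
        base = t * n
        for i in range(n):
            j = (i + 1) % n  # wrap around to close the outline
            a = base + i + 1
            b = base + j + 1
            c = base + n + j + 1
            d = base + n + i + 1
            lines.append(f"f {a} {b} {c} {d}")

    return "\n".join(lines) + "\n"
```

Writing the returned string to a `.obj` file gives an open tube whose long axis is time; capping the ends and cleaning the mesh would be left to the modeling software.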

During the course of the project we started to use Kinect cameras in a similar fashion to the laser rangers by evaluating only a single line in the camera’s depth image – not least to significantly reduce the cost of a possible future reproduction of the setup. At close range we achieved the best results with the upcoming Kinect 2.
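Reading a single line of a depth image amounts to something like the sketch below. It is an illustration only: the depth image is a plain list of rows in millimetres, a value of 0 is assumed to mean "no reading", and the pinhole parameters `fx` and `cx` are hypothetical values that a real setup would take from the camera's calibration.

```python
def depth_row_to_profile(depth_image, row, fx, cx):
    """Turn one row of a depth image into a planar (x, z) profile in metres.

    depth_image: list of rows, each a list of depths in millimetres.
    fx, cx: assumed horizontal focal length and principal point, in pixels.
    """
    profile = []
    for u, depth_mm in enumerate(depth_image[row]):
        if depth_mm <= 0:
            continue  # assumed convention: 0 marks an invalid pixel
        z = depth_mm / 1000.0
        x = (u - cx) * z / fx  # pinhole back-projection along the row
        profile.append((x, z))
    return profile
```

The result plays the same role as one sweep of a laser ranger: a planar cut through the scene that can be stacked over time.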

What is the outcome of your research?

Daniel Crooks: The final outcome will be a series of sculptural works to be exhibited at the Ars Electronica Festival, but more importantly the research marks a significant development in my practice from screen-based work into object making.

What was the biggest challenge during your research?

Daniel Crooks: The biggest challenge for the project is acquiring the level of resolution or detail that I’m after. The aim is to capture every subtle fold and crumple but realistically capturing that level of detail is still a little way off.

Otto Naderer: Regardless of the hardware used, any sensing device introduces inaccuracy, jitter and clutter into the system. This can certainly be filtered to some extent, though at the expense of spatial and temporal detail. On the other hand, there is of course Daniel’s interest in obtaining a smooth but highly detailed surface in the final sculpture. Achieving both as well as possible was quite a challenging task.
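The trade-off between filtering and detail can be seen in even the simplest filter. The sketch below uses a circular moving average over the radii of a closed outline; it is a stand-in chosen for illustration, since the interview does not say which filters the project actually used, and the function name is hypothetical.

```python
def smooth_closed_outline(radii, window=3):
    """Smooth a closed outline's radii with a circular moving average.

    radii: distances from a centre point, ordered around the outline.
    window: odd number of neighbouring samples averaged together.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    n = len(radii)
    half = window // 2
    return [
        sum(radii[(i + k) % n] for k in range(-half, half + 1)) / window
        for i in range(n)
    ]
```

Applied to `[0, 0, 3, 0, 0]` with a window of 3, the single spike is spread into three samples of height 1. If the spike was sensor jitter, that is the desired result; if it was a real fold in the fabric, a third of its height is all that survives.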

Do you already have an idea what the topic of your further research will be?

Daniel Crooks: I’m working on a number of projects right now but I’m definitely planning to continue development of the 3D slicing camera. It’s been a long path but I feel like we’re almost there now. The potential for what could be an entirely new kind of sculpture is very exciting.

The 2014 Ars Electronica Festival is set for September 4-8. A number of Daniel Crooks’s three-dimensionally printed works can be seen there.